BTN.com staff, November 30, 2015

In science fiction, the presence of machines that can learn and adapt like their human creators generally indicates something bad is going to happen. Think of Skynet from the Terminator films, the Replicants from Blade Runner or HAL 9000 from 2001: A Space Odyssey.

In real life, having the capacity to teach robots tasks that go beyond their original programming and configuration would be a welcome development. That capability is still largely in the realm of science fiction, but a team of professors and researchers in Maryland's Autonomy, Robotics and Cognition Laboratory is working to change that.

"There are quite a few robots today in the world, but they are not that intelligent," said Yiannis Aloimonos, a professor of computer science with an appointment in the Maryland Institute for Advanced Computer Studies. "So if we can increase their intelligence a little bit and get them to do some basic tasks, we think that is going to create a revolution in the near future."

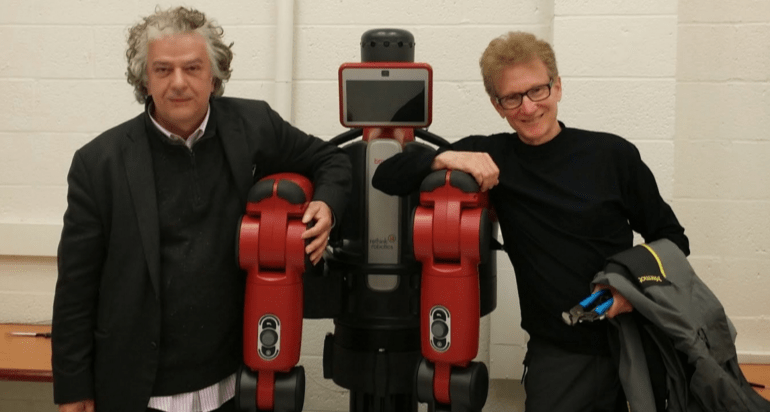

The work, which is being spearheaded by Aloimonos and assistant research scientist Cornelia Fermüller, combines the disciplines of artificial intelligence, computer vision and control theory. Aloimonos' background is in mathematics and computer vision, specifically how robots can use vision to perform tasks like navigation.

Fermüller also specializes in computer vision, primarily aspects related to motion processing and visual psychology, as well as applied mathematics.

The researchers are taking a novel approach to teaching the robots. Instead of simply programming in the individual, sequential steps of a task, the researchers are using a method inspired by the concept of parsing a sentence. The robots "understand" what the end result should be and are shown video footage of the process, which is then absorbed by the system.

"The robot has to have a certain vocabulary of actions," Fermüller said. "How does he put two things together? How does he break [them] apart? And while he's doing this, he's constantly running geometric processes, self-recognizing the objects and recognizing the places."

Though the robots still need to be told to perform the given task, they're capable of doing it even if the necessary components are out of order. In practical terms, that ability could allow companies to move from high-volume/low-mix manufacturing operations to a more varied production system, according to Aloimonos and Fermüller.

While the immediate impact is on robots, Aloimonos pointed out that the broader inspiration for his team's work is to examine patterns of human behavior and explore how we learn from that.

"What we are hoping to accomplish is to understand much better the processes that are involved in human action," he explained. "Humans do things on a day-to-day basis, and if we understand how to recognize what they are doing, we would be able to learn and repeat it."

By Grant Rindner

Basketball is back! Find available live games on our B1G+ app via BigTenPlus.com.
